Getting the Best Software to Power Up Your DeepSeek

EdytheImlay22778 2025.03.21 00:50 Views: 2

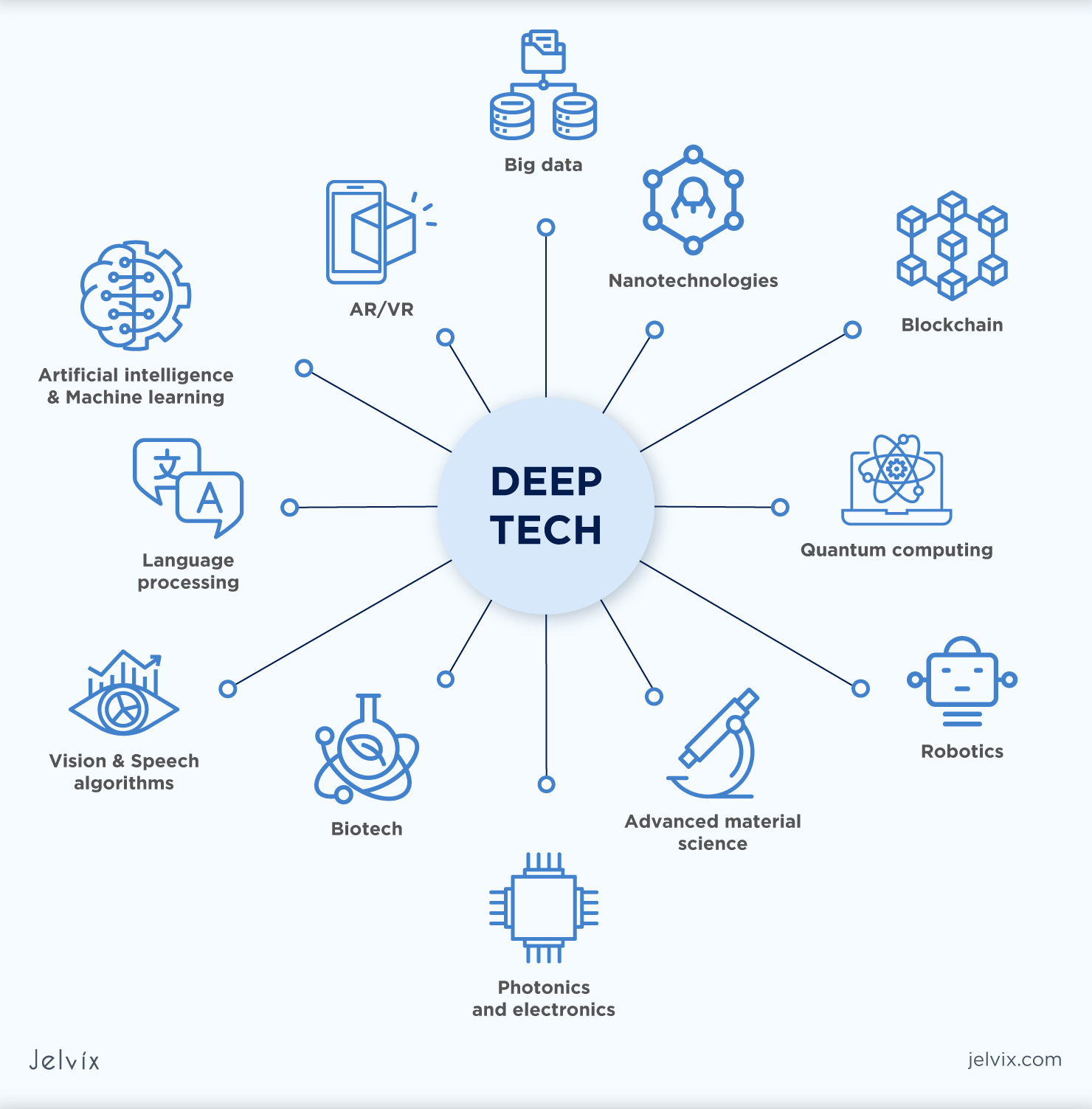

Shares of AI chipmaker Nvidia (NVDA) and a slew of other AI-related stocks sold off Monday as an app from Chinese AI startup DeepSeek boomed in popularity. You can also configure the System Prompt and choose the preferred vector database (NVIDIA Financial Data, in this case). Not only does the country have access to DeepSeek, but I believe that DeepSeek’s success relative to America’s leading AI labs will lead to a further unleashing of Chinese innovation as they realize they can compete. This suggests that DeepSeek likely invested more heavily in the training process, while OpenAI may have relied more on inference-time scaling for o1. To clarify this process, I have highlighted the distillation portion in the diagram below. As you pointed out, they have CUDA, which is a proprietary set of APIs for running parallelized math operations. This model set itself apart by achieving a substantial increase in inference speed, making it one of the fastest models in the series. 1. Inference-time scaling requires no additional training but increases inference costs, making large-scale deployment more expensive as the number of users or query volume grows.
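To make the cost point about inference-time scaling concrete, here is a minimal back-of-the-envelope sketch. All the numbers (query count, tokens per sample, price per 1,000 tokens) and the function name are made-up assumptions for illustration, not figures from DeepSeek or OpenAI. It only shows that sampling several answers per query multiplies the bill linearly.

```python
def total_inference_cost(queries: int,
                         tokens_per_sample: int,
                         samples_per_query: int,
                         cost_per_1k_tokens: float) -> float:
    """Rough cost model: every extra sample per query is paid for in tokens."""
    total_tokens = queries * samples_per_query * tokens_per_sample
    return total_tokens / 1000 * cost_per_1k_tokens


# Hypothetical workload: 10,000 queries, 500 generated tokens per sample.
baseline = total_inference_cost(10_000, 500, samples_per_query=1,
                                cost_per_1k_tokens=0.002)
# Same workload, but sampling 8 candidate answers per query (e.g. for voting).
scaled = total_inference_cost(10_000, 500, samples_per_query=8,
                              cost_per_1k_tokens=0.002)

print(f"baseline: ${baseline:.2f}, with 8 samples per query: ${scaled:.2f}")
```

Training cost is paid once, while this term grows with every new user and every extra sample, which is why the trade-off matters at deployment scale.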

SFT and only extensive inference-time scaling? These distilled models serve as an interesting benchmark, showing how far pure supervised fine-tuning (SFT) can take a model without reinforcement learning. Interestingly, the results suggest that distillation is far more effective than pure RL for smaller models. A few years back, if you searched for movie times, your search engine would offer the link to a local movie theater as the top result (along with paid-search results which were clearly marked as such). The results of this experiment are summarized in the table below, where QwQ-32B-Preview serves as a reference reasoning model based on Qwen 2.5 32B developed by the Qwen team (I think the training details were never disclosed). The DeepSeek team tested whether the emergent reasoning behavior seen in DeepSeek-R1-Zero could also appear in smaller models. We collaborated with the LLaVA team to integrate these capabilities into SGLang v0.3. DeepSeek's natural language processing capabilities make it a powerful tool for educational purposes. DeepSeek's Mixture-of-Experts (MoE) architecture stands out for its ability to activate just 37 billion parameters during tasks, even though it has a total of 671 billion parameters. However, what stands out is that DeepSeek-R1 is more efficient at inference time.
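The idea of activating only a fraction of a Mixture-of-Experts model can be sketched with a toy router. This is a generic top-k softmax gate written for illustration. The expert functions, the gate logits, and the choice of k are assumptions, and nothing here describes DeepSeek's actual routing. The only figures taken from the text are 37 billion active parameters out of 671 billion.

```python
import math


def top_k_route(gate_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    top = sorted(range(len(gate_logits)),
                 key=lambda i: gate_logits[i], reverse=True)[:k]
    # Softmax over the selected logits only, so the weights sum to 1.
    peak = max(gate_logits[i] for i in top)
    exps = [math.exp(gate_logits[i] - peak) for i in top]
    norm = sum(exps)
    return [(i, e / norm) for i, e in zip(top, exps)]


def moe_forward(x, experts, gate_logits, k):
    """Run only the selected experts and mix their outputs by gate weight."""
    return sum(w * experts[i](x) for i, w in top_k_route(gate_logits, k))


# Toy setup (hypothetical): 8 scalar "experts", only 2 are run per input.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
gate_logits = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]

routed = top_k_route(gate_logits, k=2)
output = moe_forward(1.0, experts, gate_logits, k=2)

# Share of parameters active per token, using the figures quoted above.
active_fraction = 37 / 671
print(f"active experts: {[i for i, _ in routed]}, "
      f"active parameter share: {active_fraction:.1%}")
```

With the quoted figures, roughly 5.5% of the parameters do work for any given token, which is where the inference savings come from.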

1. Smaller models are more efficient. 4. Distillation is an attractive approach, especially for creating smaller, more efficient models. This aligns with the idea that RL alone may not be sufficient to induce strong reasoning abilities in models of this scale, whereas SFT on high-quality reasoning data can be a more effective strategy when working with small models. 2. DeepSeek-V3 trained with pure SFT, similar to how the distilled models were created. As we can see, the distilled models are noticeably weaker than DeepSeek-R1, but they are surprisingly strong relative to DeepSeek-R1-Zero, despite being orders of magnitude smaller. This comparison provides some additional insights into whether pure RL alone can induce reasoning capabilities in models much smaller than DeepSeek-R1-Zero. The table below compares the performance of these distilled models against other popular models, as well as DeepSeek-R1-Zero and DeepSeek-R1. And it’s impressive that DeepSeek has open-sourced their models under a permissive open-source MIT license, which has even fewer restrictions than Meta’s Llama models. I’d say it’s roughly in the same ballpark. In fact, the SFT data used for this distillation process is the same dataset that was used to train DeepSeek-R1, as described in the previous section. Surprisingly, DeepSeek also released smaller models trained via a process they call distillation.

While GPT-4o can support a much larger context length, the cost to process the input is 8.92 times higher. By leveraging the DeepSeek-V3 model, it can answer questions, generate creative content, and even assist in technical research. Yes, DeepSeek-V3 can understand and generate technical documentation, provided the input is clear and detailed. Developers worldwide can contribute, improve, and optimize models. It’s also interesting to note how well these models perform compared to o1-mini (I believe o1-mini itself might be a similarly distilled version of o1). For fear that the same tricks might work against other popular large language models (LLMs), however, the researchers have chosen to keep the technical details under wraps. While the two companies are both developing generative AI LLMs, they have different approaches. However, in the context of LLMs, distillation does not necessarily follow the classical knowledge distillation approach used in deep learning. Instead, here distillation refers to instruction fine-tuning smaller LLMs, such as Llama 8B and 70B and Qwen 2.5 models (0.5B to 32B), on an SFT dataset generated by larger LLMs. SFT is the key approach for building high-performance reasoning models. SFT (approach 3) with inference-time scaling (approach 1). This is likely what OpenAI o1 is doing, except it’s probably based on a weaker base model than DeepSeek-R1, which explains why DeepSeek-R1 performs so well while remaining relatively cheap at inference time.
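To illustrate what "distillation" means in this sense, the sketch below builds a small SFT dataset from a larger model's outputs. Everything in it is a placeholder: `toy_teacher` stands in for a call to a large reasoning model, and the record fields (`instruction`, `response`) are an assumed format, not the one DeepSeek used. The actual fine-tuning of the smaller model on these records is left out.

```python
import json
from typing import Callable, Dict, List


def build_sft_dataset(prompts: List[str],
                      teacher: Callable[[str], str]) -> List[Dict[str, str]]:
    """Pair each prompt with the teacher's answer to form SFT training records."""
    return [{"instruction": p, "response": teacher(p)} for p in prompts]


def toy_teacher(prompt: str) -> str:
    # Hypothetical stand-in: a real pipeline would query a large LLM here.
    return f"Step-by-step reasoning for: {prompt}"


prompts = ["What is 2 + 2?", "Name a prime number above 10."]
dataset = build_sft_dataset(prompts, toy_teacher)

# One JSON object per line, a common layout for instruction-tuning data.
jsonl = "\n".join(json.dumps(record) for record in dataset)
print(jsonl)
```

The student model never sees the teacher's logits, only its generated text, which is the difference from classical knowledge distillation noted above.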

Copyright © youlimart.com All Rights Reserved.鲁ICP备18045292号-2 鲁公网安备 37021402000770号